Let’s Fund Some Huge Prizes In AI Safety

Preparing for the largest nonprofit windfall in history.

This is a lightly edited (mostly expanded) version of my submission to Dwarkesh’s blog prize.

To make AI go well, we must solve “AI alignment”, cooperate under competitive pressure, and ensure AI benefits everyone.

To belabor the point, AI safety is about to face the largest nonprofit windfall in history. The OpenAI Foundation’s stake in OpenAI is now worth $180bn. Anthropic cofounders have pledged 80% of their wealth. Those AI companies tend to triple in valuation every year, so hundreds of billions may be an undercount. And it might be liquid as soon as this year! Coefficient Giving, thus far the largest funder of AI safety, expects to move ~$1bn in global catastrophic risk grants in 2026 and, not to be outdone, plans on scaling up much more.

The traditional grantmaking approach is to fund existing organizations. Yet, we cannot possibly spend hundreds of billions that way: vetting is slow, existing organizations can’t scale. Even if we decided to fund every reasonable-looking org (METR/Transluce/RAND) to hundreds of millions a year, try desperately to seed new ones, and run a regranting program that puts the Future Fund’s one to shame, that closes maybe $20bn of the gap. What about the rest?

I’d argue that we should fund some massive, public prizes: grand prizes for full problems, sub-prizes for intermediate milestones, and tooling grants (compute, tokens, datasets) for competitors. The work can begin immediately: though OAI and Anthropic equity is mostly illiquid, prizes can pay out as liquidity arrives.

AI Alignment

Start with alignment. Indeed, I can already hear Eliezer Yudkowsky objecting: “Your alignment prize is doomed. You have ‘one critical try.’ Your toy solution proves nothing.”

Yet, alignment can be broken into -- still incredibly difficult -- subproblems that are easier to verify progress on: adversarial robustness, formal verification, full mechanistic interpretability.

On a tiny model, we can (without superintelligence) verify whether your solution survived an adaptive white-box automated attacker, produced a closed-form proof bounding the model’s behavior, or fully reverse-engineered the model’s internals. Then follow with prizes for reducing the “alignment tax” until these solutions can be deployed at frontier scale.

Heck, while you’re at it, go 10x or 100x bug bounties for finding jailbreaks (those prizes on Grayswan are looking awfully small), and fund all interesting demonstrations of model organisms. And this may be harder to verify, but fund prizes for creating agendas and strategies to hand off to the future automated alignment researchers and answer some of those hard questions.

We can damn well make sure that this “one critical try” is slanted in our favor.

Cooperation Amid Competitive Pressures

Incentivizing cooperation is a harder, fundamentally sociopolitical problem. Tax-exempt nonprofit rules close off the obvious lever of large-scale political spending for regulation and diplomacy (though wink wink, Anthropic cofounders).

Prizes work differently here: they reduce technical friction to cooperation. In general, I think this is a great target for many technical researchers otherwise in crisis because they aren’t in a lab. Political will, when it does happen, can only reach for tools that exist. As Dean Ball tweets, political winds change on a dime: just last week, the Trump administration was reportedly considering pre-deployment evaluations and US-China negotiations. However, without win-win technical solutions, policymakers either don’t act, or grab the easiest legible option (like a datacenter ban).

Take compute verification. Cheap, trustless attestation of training runs is the technology that makes a US-China deal possible -- without it, neither side can credibly commit to any mutual terms. Without prizes, these problems are orphaned: too applied for academia, too unprofitable for VCs, too technical for most philanthropy. They also span domains no single team holds in-house: cryptography, hardware security, international law.

Ensuring AI Benefits Everyone

From redistributing wealth, to curing cancer, to protecting children, or animals, or even the AIs themselves, the subproblems for ensuring AI benefits everyone range from barely scoped (child safety) to well-mapped (biosecurity).

Where “invention” is the bottleneck, a similar prize paid on the first qualifying solution fits cleanly (Alzheimer’s, a provably-secure web stack). Where the bottleneck is deployment, manufacturing, or regulatory derisking, an advance market commitment (a purchase guarantee) fits better. The $1.5B pneumococcal AMC delivered three vaccines and saved 700,000 lives. Both are pull funding: pay on outcomes, let competitors organize around the rubric.

In biosecurity, threat models (natural/artificial pandemics, rogue actors) and promising approaches (PPE stockpiles, wastewater sequencing) are well-understood. A Warp Speed-style commitment to buy N95s could provide every household in the US with PPE without the poor CG biosecurity team having to figure out the logistics. Capitalism will provide.

Contrast this with “child safety” (or job displacement, or AI consciousness), where no similar playbook exists. For these, before a prize, the primary work of the foundations will be to convene experts and define the problem. We can imagine some clarifying problem decompositions (parasocial attachment, AI-generated CSAM) and accompanying technical solutions (robust LLM personas, better classifiers).

For these pre-paradigmatic fields, the foundation’s first dollars should go to experts themselves: pay them to publish threat models, candidate interventions, and potential rubrics. Like podcasting, the difficulty is asking good questions.

Implementation

Why are prizes so rare? My best guess is institutional incentives. Grantmakers, frankly, aren’t rewarded for impact -- they’re rewarded (or simply not fired) for deploying capital defensibly, such as to fancy institutions. They rarely have the technical expertise to write a good rubric, or the resources to hire experts who do; what they can do is sniff out which orgs seem credible to their networks.

The new capital coming online has much less baggage: generally everyone is making new hires, with few prior commitments. For the OpenAI Foundation in particular, the optimistic, ambitious vibes of a prize are much less awkward than funding civil society grantees who might publicly criticize or undermine its for-profit arm. And talent! Talent and founder-level “capacity” are so much of a constraint. Applying to little-publicized “requests for proposals” with bounded upside is not a good way to attract ambitious founders. Even Dwarkesh is hiring his research collaborator, this round, with a blog prize rather than a job posting.

And, ultimately, prizes can scale. I cannot imagine us building the capacity to evaluate $30bn/yr of individual grants. Let’s do some napkin math: that’s 6,000 grants of $5mn each, which today’s ~100 reasonably-shaped organizations cannot absorb. By contrast, 20-30 high-leverage prizes could cover the same $30bn/year -- a few in the billions, most in the hundreds of millions. Beyond the prize purse itself, the rest goes to providing competitors with compute, tokens, etc. For example, compute verification may mean actually renting a datacenter for testing hardware proposals (if you know anyone who knows how I can get one, I’d love to hear about it).
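The napkin math in the paragraph above can be sketched in a few lines of Python. Every input is one of the post's rough figures, not data, and the function names are made up for illustration. The per-prize number is an average budget that lumps the purse together with competitor support (compute, tokens), so it says nothing about how the money would actually be split across prizes.

```python
# Napkin math for deploying $30bn/yr. All inputs are the post's rough
# figures; the helper names are hypothetical, chosen for illustration.

ANNUAL_BUDGET = 30_000_000_000   # $30bn per year
GRANT_SIZE = 5_000_000           # $5mn per traditional grant
EXISTING_ORGS = 100              # ~100 reasonably-shaped organizations
PRIZE_COUNTS = (20, 30)          # 20-30 high-leverage prizes


def grants_needed(budget: int, grant_size: int) -> int:
    """Number of fixed-size grants required to spend the whole budget."""
    return budget // grant_size


def grants_per_org(num_grants: int, num_orgs: int) -> float:
    """Grants each existing organization would have to absorb per year."""
    return num_grants / num_orgs


def average_prize_budget(budget: int, num_prizes: int) -> float:
    """Average yearly budget per prize, purse plus competitor support."""
    return budget / num_prizes


if __name__ == "__main__":
    n = grants_needed(ANNUAL_BUDGET, GRANT_SIZE)
    print(f"Grants to evaluate per year: {n}")
    print(f"Grants per existing org: {grants_per_org(n, EXISTING_ORGS):.0f}")
    for count in PRIZE_COUNTS:
        avg = average_prize_budget(ANNUAL_BUDGET, count)
        print(f"{count} prizes -> average ${avg / 1e9:.1f}bn each per year")
```

Under these assumptions, the grant route means thousands of separate vetting decisions a year, while the prize route means writing and judging a few dozen rubrics.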

And finally, “taste” is a rare, rare commodity. Traditional philanthropy asks one grantmaker to choose the problem, the team, and the approach. The result is a field that defaults to whatever passes the in-group sanity filter -- a large part of why AI safety has produced, charitably, modest progress. For prizes, you need taste only on problem identification, not on the hundreds of org-by-org bets that follow.

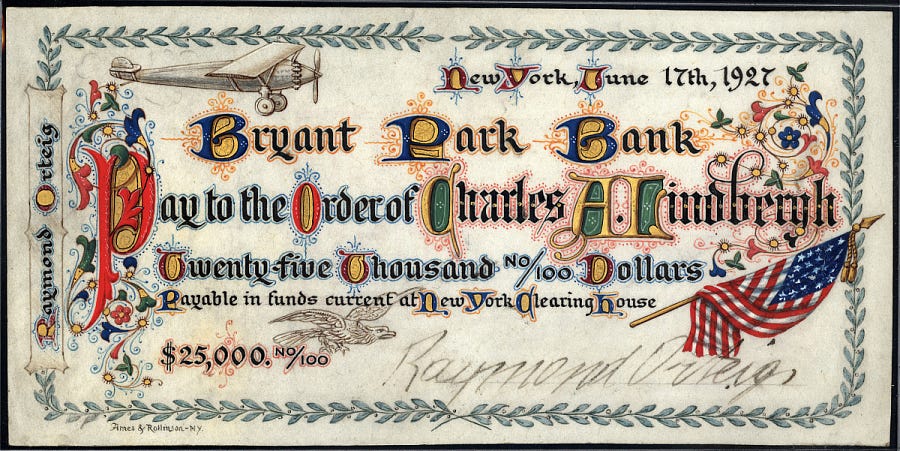

Prizes aren’t the whole answer. You cannot feed a researcher with the promise of a prize. VC-like syndicates fronting researchers in exchange for a slice of the upside sound like a libertarian fantasy (and they are, at smaller scales). I do think, though, that for literal-billion-dollar prizes, the math works! The $10M Ansari X Prize pulled $100M+ in research spend across 26 teams.

Here’s a funny failure mode: what if the prizes are too big and, like those investment bankers who retire early, the world’s best talent wins a few of the early prizes and retires forever? I’m not actually concerned about this: I know the quants are in it for the love of the game, and the money just got them through the door.

Some inputs, like access to internal frontier models, may never be available. We won’t be able to democratize that. Long-horizon institution-building, weirder conceptual research, and political lobbying will need different (probably traditional) vehicles.

Yet, from commercial aviation, to self-driving cars, to deep learning, well-scoped prizes have repeatedly cracked difficult sociotechnical problems. It’s no coincidence that the last serious attempt at deploying AI safety money at scale came to the same conclusion: I remember hearing rumors in 2022 about Leopold’s $1bn alignment prize proposal at the Future Fund. Let’s do that.

Thanks to Sophie Kim, Saheb Gulati, Arunim Agrawal, Jasmine Li and Richard Ren for feedback and discussion.

One case against some of the verifiable prizes you described above is that AIs will continue to get much better at the types of research where you can verify the outcome (https://blog.redwoodresearch.org/p/ais-can-now-often-do-massive-easy), and that AI labs will be in an amazing position to use their powerful AI labor on such tractable research problems. So, most human research efforts should potentially focus on areas where success isn't currently verifiable (and try to make it more verifiable / make direct progress in it instead).

Relatedly, an AI alignment prize was a contending alternative to the OAI superalignment team.

From: https://www.newyorker.com/magazine/2026/04/13/sam-altman-may-control-our-future-can-he-be-trusted

> He added that he was thinking of committing a billion dollars to the issue, which many A.I. experts considered the most important unsolved problem in the world, potentially by endowing a prize to incentivize researchers around the world to study it. Although the graduate student had “heard vague rumors about Sam being slippery,” he told us, Altman’s show of commitment won him over. He took an academic leave to join OpenAI. But, in the course of several meetings in the spring of 2023, Altman seemed to waver. He stopped talking about endowing a prize. Instead, he advocated for establishing an in-house “superalignment team.”